TL;DR

Your Google rankings don’t tell you if AI recommends you. Only 12% of AI citations match Google’s top 10.

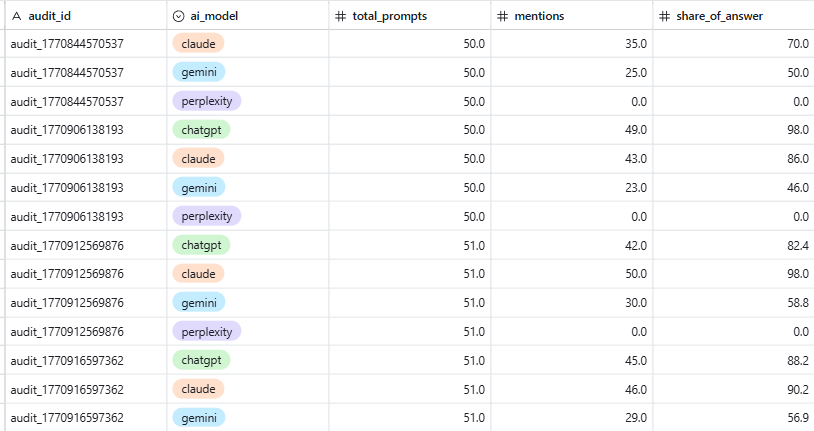

The audit: 20-30 buyer prompts → test across 5 AI platforms → score competitors → diagnose gaps → 90-day action plan. Takes 2-4 hours, zero cost.

The finding most teams miss: 85% of AI brand mentions come from third-party sources. Fix your website all you want. The real wins are G2, Reddit, YouTube, LinkedIn, and publications.

The honest caveat: AI results are non-deterministic. Two people asking the same question can get different answers. One prompt triggers 6-20 sub-queries behind the scenes. You’re measuring probability, not position. That doesn’t make it useless. It makes it different from what you’re used to.

What it can do: $1.2M influenced pipeline in 90 days for a $10M ARR SaaS client.

An AI search visibility audit shows you where your B2B SaaS brand appears, gets recommended, or gets ignored across ChatGPT, Claude, Perplexity, Gemini, and Google AI Overviews. It takes 2-4 hours, costs nothing, and produces a prioritised fix list you can act on the same week.

We’ve run this for dozens of B2B SaaS companies since 2025. The five steps below are the same ones we use at VisibleIQ. One client used the findings to generate $1.2M in influenced pipeline within 90 days. Most companies we audit haven’t checked whether AI systems even know they exist. The ones that have checked aren’t much better off. Across our audits, the average B2B SaaS company appears in just 16% of buying-intent prompts across all five platforms.

Why Your Google Rankings Don’t Tell You What AI Systems Think

Google rankings and AI citations are diverging fast. Only 12% of AI citations match Google’s top 10 (Ahrefs, 2025), and for Google AI Overviews specifically, the share of citations drawn from the top 10 dropped from 76% to 38% in under a year. Your SEO performance doesn’t predict whether AI recommends you, and 73% of B2B buyers now use AI tools during vendor research (Averi.ai, 2026).

That gap isn’t stable. It’s growing. In mid-2025, 76% of Google AI Overview citations came from pages in Google’s top 10. By early 2026, that number dropped to 38% (Ahrefs). The systems are pulling apart.

The overlap between AI Overview citations and organic results sits at roughly 54% (Ahrefs). For any query where you rank well, there’s close to a coin-flip chance you’re not cited in the AI-generated answer for that same query.

Meanwhile, your buyers have already moved. 73% of B2B buyers now use AI tools like ChatGPT and Perplexity during vendor research (Averi.ai, 2026). 68% of B2B SaaS CMOs start their searches with AI tools before traditional search, a share that tripled from the previous year (Wynter, 2026). And Google itself is cannibalising its own clicks: ~60% of Google queries are now zero-click, with organic CTR down to 40.3% in the US (Click-Vision, Mar 2026). When an AI Overview does appear, organic CTR drops by 61% (Seer Interactive, 2025).

We had a client ranking in the top 5 for 14 high-intent keywords. AI visibility across ChatGPT and Perplexity? Zero mentions on 11 of those 14 prompts. Google said they were winning. AI didn’t know they existed.

People tell you “just do good SEO” and the AI visibility will follow. That advice isn’t wrong. It’s just too vague to be useful. What does “good” mean here? Because what Google rewards and what AI systems reward are measurably different:

- Brand web mentions correlate with AI citations at 0.664. Backlinks sit at 0.218, roughly 3x weaker (Ahrefs, 2025).

- Most AI brand mentions come from third-party pages, not your own website (Airops, 2026). Your blog isn’t where AI finds you.

- Websites with original content earn 4.31x more AI citations per URL than directory listings (Yext, analysis of 17.2M citations).

- AI search traffic converts at a very different rate: ChatGPT referrals convert at 15.9% vs 1.76% for Google organic, roughly 9x higher (Seer Interactive, 2025). Low volume, high quality.

The disciplines are the same: SEO, content, digital PR. The signals those systems reward are different. That’s what this audit measures.

Step 1: Map the Buyer Prompts That Actually Drive Pipeline

Start with buyer prompts, not keywords. AI decomposes each prompt into 6-20 sub-queries behind the scenes (Google I/O 2025). Build 20-30 prompts across six categories and ensure your content covers the sub-query types that feed into AI answers. 60-70% of pipeline-influencing prompts fall into comparison and “best for” categories.

The starting point for an AI search audit is buyer prompts, not keywords. Buyers don’t type “revenue intelligence software” into ChatGPT. They ask “What’s the best revenue intelligence tool for mid-market SaaS with Salesforce integration?” Your audit needs to reflect how people actually use these tools.

Build a list of 20-30 prompts across these categories:

- “Best X for Y” prompts: “best [your category] for [segment/use case]”

- Comparison prompts: “[your brand] vs [competitor]” and the reverse

- Alternative prompts: “alternatives to [competitor]” and “alternatives to [your brand]”

- Evaluation prompts: “is [your brand] worth it” or “[your brand] pros and cons”

- Problem prompts: “how to fix [pain point your product solves]” or “why is [process] so slow”

- Category prompts: “what is [your category]” and “how to choose a [your category]”

Don’t guess at these. Pull them from your sales calls. What do prospects say they searched before booking a demo? That’s your prompt list.
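If you want a starting list before the sales-call review, the six categories template cleanly. This is a minimal Python sketch, not part of any tool: the brand, competitor, category, segment, and pain-point values are hypothetical placeholders, and the generated wording should be replaced with the phrasing prospects actually use.

```python
# Hypothetical inputs: swap in your own brand, competitors, category, and segments.
BRAND = "AcmeIQ"
COMPETITORS = ["RivalOne", "RivalTwo"]
CATEGORY = "revenue intelligence platform"
SEGMENTS = ["mid-market SaaS", "Salesforce users"]
PAIN_POINT = "inaccurate sales forecasts"


def build_prompts(brand, competitors, category, segments, pain_point):
    """Expand the six prompt categories into (category, prompt) pairs."""
    prompts = [("best_for", f"best {category} for {seg}") for seg in segments]
    for comp in competitors:
        prompts.append(("comparison", f"{brand} vs {comp}"))
        prompts.append(("comparison", f"{comp} vs {brand}"))
        prompts.append(("alternative", f"alternatives to {comp}"))
    prompts += [
        ("alternative", f"alternatives to {brand}"),
        ("evaluation", f"is {brand} worth it"),
        ("evaluation", f"{brand} pros and cons"),
        ("problem", f"how to fix {pain_point}"),
        ("category", f"what is a {category}"),
        ("category", f"how to choose a {category}"),
    ]
    # dict.fromkeys drops exact duplicates while keeping first-seen order.
    return list(dict.fromkeys(prompts))


prompts = build_prompts(BRAND, COMPETITORS, CATEGORY, SEGMENTS, PAIN_POINT)
for kind, text in prompts:
    print(f"{kind:<12} {text}")
```

With two segments and two competitors this yields 14 prompts; adding segments and competitors gets you into the 20-30 range.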

Here’s what most audit guides don’t tell you: when a buyer types one prompt, the AI doesn’t run one search. It decomposes that single question into 6-20 sub-queries running in parallel behind the scenes (Google confirmed this at I/O 2025, calling it “query fan-out”). A prompt like “best CRM for mid-market SaaS” might trigger sub-queries across seven types: definition (“what is mid-market CRM”), comparison (“CRM A vs CRM B”), how-to (“how to evaluate CRM for SaaS”), use case (“CRM for SaaS sales teams”), objection (“is CRM X too expensive”), entity expansion (“CRM integrations with Salesforce”), and metric (“CRM pricing comparison 2026”).

Your brand needs to show up across enough of those sub-query types to make it into the final answer. Only 27% of fan-out sub-queries remain consistent across different searches. That means broad topical coverage beats surgical targeting of individual prompts.
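One rough way to check breadth is to tag each page with the sub-query types it answers, then look for types nothing covers. The sketch below assumes you do that tagging by hand; the page paths are hypothetical, and the platforms don’t expose their actual fan-out queries, so this measures your coverage, not what any AI retrieved.

```python
# The seven sub-query types listed above.
SUBQUERY_TYPES = {"definition", "comparison", "how-to", "use case",
                  "objection", "entity expansion", "metric"}

# Hypothetical content inventory: page path -> sub-query types you judge it answers.
content_map = {
    "/what-is-revenue-intelligence": {"definition"},
    "/acmeiq-vs-rivalone": {"comparison", "objection"},
    "/integrations/salesforce": {"entity expansion"},
}


def coverage_report(content_map, types=SUBQUERY_TYPES):
    """Return (share of types covered by at least one page, sorted list of uncovered types)."""
    covered = set().union(*content_map.values()) if content_map else set()
    missing = sorted(types - covered)
    return len(covered & types) / len(types), missing


share, missing = coverage_report(content_map)
print(f"Covered {share:.0%} of sub-query types; missing: {missing}")
```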

We find that 60-70% of the prompts influencing B2B SaaS pipeline fall into comparison and “best for” categories. Start there, but make sure your content covers the sub-query types that feed into those answers.

Step 2: Test Your Brand Across Five AI Platforms

Test every prompt across ChatGPT, Claude, Gemini, Perplexity, and Google AI Overviews. Each platform has different retrieval mechanics and source preferences. Only 11% of domains get cited by both ChatGPT and Perplexity (Semrush, 2025). In our audits, the average company is visible on just 2 out of 5 platforms.

Each AI platform has its own retrieval mechanics, source preferences, and citation behaviour. Testing across all five tells you where gaps are platform-specific and where they’re systemic. In our audits, the average company is consistently visible on 2 out of 5 platforms. Only 9% are visible across all five.

Run your 20-30 prompts through:

- ChatGPT (latest model, web search enabled)

- Claude (claude.ai, with web search)

- Google Gemini (gemini.google.com)

- Perplexity (both default and Pro search)

- Google AI Overviews (search Google, look at the AI summary)

For each prompt on each platform, record four things:

- Mentioned? Does your brand appear in the response?

- Described correctly? Is your category and positioning accurate?

- Recommended? Are you on a shortlist or in a direct recommendation?

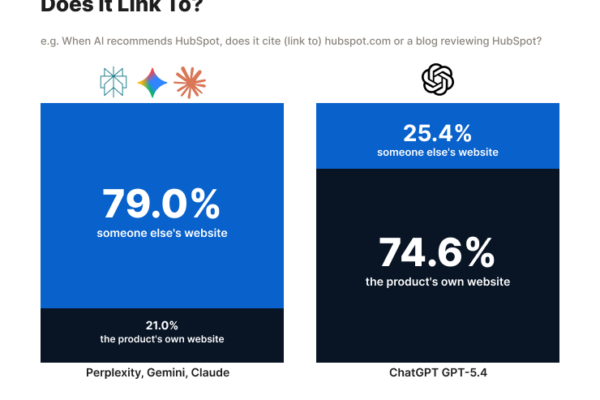

- Cited? Does the AI link to your site or a third-party page about you?

This takes 2-3 hours for 25 prompts across five platforms. Tedious, but necessary.
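If you’d rather log results in code than type straight into a spreadsheet, one row per prompt, platform, and run is enough, and CSV pastes into any spreadsheet tool. In this sketch the field names are illustrative labels for the four checks, and the example rows and dates are made up.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class Observation:
    prompt: str
    platform: str
    run_date: str             # ISO date string, e.g. "2026-03-02"
    mentioned: bool
    described_correctly: bool
    recommended: bool
    cited: bool


def to_csv(observations):
    """Serialise observations into CSV text with a header row."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=[f.name for f in fields(Observation)])
    writer.writeheader()
    for obs in observations:
        writer.writerow(asdict(obs))
    return buf.getvalue()


# Hypothetical rows for one prompt on two platforms.
rows = [
    Observation("best revenue intelligence platform for mid-market SaaS",
                "Perplexity", "2026-03-02", True, True, False, True),
    Observation("best revenue intelligence platform for mid-market SaaS",
                "ChatGPT", "2026-03-02", False, False, False, False),
]
print(to_csv(rows))
```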

Why each platform behaves differently

Understanding the retrieval mechanics matters for interpreting your results.

ChatGPT answers 60% of queries from parametric knowledge alone, with no web search triggered at all. Only 18% of conversations trigger a web search. When it does search, 87% of its citations match Bing’s top 10 results, not Google’s (important if your SEO strategy is Google-only). ChatGPT is also developing “whitelisted” batches of domains per query type, where non-established sites in a category may be filtered out automatically. Turn 1 of a conversation is 2.5x more likely to trigger citations than turn 10.

Perplexity searches the live web in real time for every query. Fresh content can appear in citations within hours. It cross-references multiple sources and uses a curated list of reputable domains. Reddit makes up 46.7% of Perplexity’s top-10 cited sources (Averi.ai, 2026). Its smaller, more selective index means quality matters more than quantity.

Google AI Overviews use Gemini to retrieve candidates, then score them for quality, coverage, and E-E-A-T signals. 52% of citations come from top-10 organic results, but 48% come from lower-ranking pages based on content quality and relevance. 40-61% of AI Overview responses contain bullet points or step-by-step formats. YouTube makes up 23.3% of cited sources (Semrush, 2025).

Claude uses web search with its own retrieval logic. There’s less public data on its citation preferences, which is exactly why you need to test it separately.

Results won’t be consistent. That’s the point.

Run the same prompt twice on ChatGPT and you’ll get different brands mentioned, different source links, different recommendations. This isn’t a bug. AI search is non-deterministic. There’s no fixed “position 3” like Google. Two buyers asking the same question at the same time can get different answers.

When we run the same prompt 10 times across platforms, the average brand appears in only 3 of those responses. The same buyer asking the same question tomorrow might get a completely different shortlist. SparkToro’s 2026 study (2,961 prompts across ChatGPT, Claude, and Google AI) confirmed this: AI gives a different recommendation list more than 99% of the time.

What this means for your audit: run each prompt at least twice per platform, on different days, and more often for your priority prompts. You’re not looking for a fixed ranking. You’re looking for frequency. If you show up 7 out of 10 times on a prompt, that’s strong. If you show up once, that’s weak. Track the pattern, not the individual result.
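To make “frequency, not position” concrete, you can attach an uncertainty range to each prompt’s inclusion rate. The sketch below uses the Wilson score interval, a standard statistic for proportions swapped in here for illustration; it isn’t something the AI platforms report. The prompt labels, run results, and the strong/weak thresholds (loosely based on the 7-out-of-10 and once-out-of-10 examples) are assumptions.

```python
import math


def inclusion_rate(runs):
    """runs: one boolean per repeated run of a single prompt (True = brand mentioned)."""
    return sum(runs) / len(runs)


def wilson_interval(hits, n, z=1.96):
    """Approximate 95% Wilson score interval for a proportion."""
    if n == 0:
        return (0.0, 1.0)
    p = hits / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return (max(0.0, centre - half), min(1.0, centre + half))


def label(rate, strong=0.7, weak=0.2):
    # Illustrative thresholds, not an industry standard.
    if rate >= strong:
        return "strong"
    if rate <= weak:
        return "weak"
    return "moderate"


# Hypothetical results from 10 runs per prompt.
runs = {"best X for Y": [True] * 7 + [False] * 3, "X vs Y": [True] + [False] * 9}
for prompt, results in runs.items():
    rate = inclusion_rate(results)
    lo, hi = wilson_interval(sum(results), len(results))
    print(f"{prompt}: {rate:.0%} ({label(rate)}), 95% interval {lo:.0%}-{hi:.0%}")
```

The interval shows why a handful of runs is thin evidence: 7 out of 10 spans roughly 40% to 89%, and with only two runs, even 2 out of 2 gives roughly 34% to 100%.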

Watch how each platform describes you. If ChatGPT calls you a “CRM tool” when you’re a revenue intelligence platform, that’s a classification problem, and that classification directly determines which buyer prompts surface your brand.

Step 3: Score Your Competitors’ AI Visibility

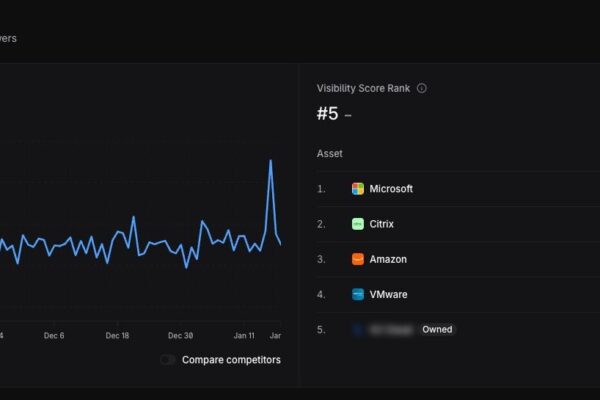

Run the same prompts for competitors and build a visibility matrix. Track share of voice, not just presence. In our audits, the category leader shows up in 4x more buying-intent prompts than the company requesting the audit.

Your visibility only means something relative to who else shows up. Running the same prompts for competitors reveals which brands dominate AI recommendations in your category, what share of voice they hold, and which prompts are winnable.

Build a matrix:

| Prompt | Your Brand | Competitor A | Competitor B | Competitor C |
|---|---|---|---|---|
| “best X for Y” | Mentioned | Recommended | Not mentioned | Cited |
| “[Brand] vs [Comp A]” | Described wrong | Recommended | N/A | N/A |

This tells you:

- Which competitors own the AI recommendations in your space

- Which prompts you’re absent from entirely

- Where you’re mentioned but described wrong (often worse than not showing up)

- Where competitors get cited with source links while you’re only mentioned in passing

Track share of voice, not just presence. If Competitor A appears in 9 out of 10 responses for “best [category] for [use case]” and you appear in 2, that gap is your priority.
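Share of voice can be calculated a few ways. This sketch defines it as each brand’s share of all tracked-brand mentions across repeated responses, alongside the simpler appearance rate; both definitions are assumptions for illustration, and the response data mirrors the hypothetical 9-out-of-10 vs 2-out-of-10 example.

```python
from collections import Counter


def appearance_rate(responses, brand):
    """Fraction of responses that mention the brand at all."""
    return sum(brand in mentioned for mentioned in responses) / len(responses)


def share_of_voice(responses, brands):
    """Each brand's share of all mentions of the tracked brands."""
    counts = Counter(b for mentioned in responses for b in brands if b in mentioned)
    total = sum(counts.values())
    return {b: counts[b] / total if total else 0.0 for b in brands}


# Ten hypothetical responses to one prompt: Competitor A appears in 9, you in 2.
responses = [{"Competitor A", "You"}] * 2 + [{"Competitor A"}] * 7 + [set()]
brands = ["You", "Competitor A"]

sov = share_of_voice(responses, brands)
for b in brands:
    print(f"{b}: appears in {appearance_rate(responses, b):.0%}, share of voice {sov[b]:.0%}")
```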

The competitor matrix is where most companies have their real moment of clarity. In our audits, the category leader typically shows up in 4x more buying-intent prompts than the company requesting the audit. They assumed good rankings meant good visibility across the board. It doesn’t.

Step 4: Diagnose Why AI Systems Miss or Misclassify Your Brand

The most common failure points: missing third-party presence (the #1 gap in 71% of our audits), unclear entity definitions, poor content structure, inconsistent descriptions across platforms, and stale content. 85% of AI brand mentions come from third-party pages (Airops, 2026).

With the gaps mapped, you need to understand what’s causing them. AI systems choose what to cite based on signals that overlap with Google’s but are weighted differently. These are the failure points we see most often in B2B SaaS.

No clear entity definition. Your homepage says “the platform for modern revenue teams.” AI can’t categorise that. State your product category, target audience, and differentiator in the first two sentences of your homepage and landing pages. Be specific enough that a machine reading one paragraph knows what you do, who it’s for, and why it’s different. High entity density (explicit mention of brands, tools, categories by name) increases citation likelihood.
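A crude self-check is to test whether the first two sentences of your homepage name the category, an audience, and a differentiator at all. This is a keyword heuristic, not how any AI system parses a page; the example copy, company name, and search terms are hypothetical, and the naive sentence split will misfire on abbreviations like “e.g.”.

```python
import re


def opening_sentences(text, n=2):
    """Naive split on ., ! or ? followed by whitespace; good enough for a quick check."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return " ".join(sentences[:n])


def entity_check(text, category, audiences, differentiators):
    """Report whether the opening names the category, an audience, and a differentiator."""
    opening = opening_sentences(text).lower()
    return {
        "category": category.lower() in opening,
        "audience": any(a.lower() in opening for a in audiences),
        "differentiator": any(d.lower() in opening for d in differentiators),
    }


# Hypothetical terms you would expect a clear opening to contain.
TERMS = dict(
    category="revenue intelligence platform",
    audiences=["mid-market", "B2B SaaS"],
    differentiators=["unlike", "forecasts pipeline"],
)
vague = "The platform for modern revenue teams. Built for what's next."
clear = (
    "AcmeIQ is a revenue intelligence platform for mid-market B2B SaaS sales teams. "
    "Unlike call-recording tools, it forecasts pipeline directly from Salesforce data."
)
print("vague:", entity_check(vague, **TERMS))
print("clear:", entity_check(clear, **TERMS))
```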

No third-party presence. This is the biggest one, and the #1 gap in 71% of our audits. 85% of brand mentions in AI answers come from third-party pages (Airops, 2026). If the only place talking about your product is your own website, AI systems don’t have enough independent sources to recommend you confidently. G2 reviews, Reddit discussions, YouTube comparisons, guest articles, LinkedIn commentary from your team: these are where AI models find the signals that lead to citations.

LLMs also cross-verify claims across sources. Google’s AGREE framework shows how AI reduces hallucination risk by checking whether information is consistent across independent sources. If your product description only exists on your own site, there’s nothing to cross-reference. If it exists on G2, Reddit, YouTube, and three publications saying the same thing, AI trusts it.

Missing competitor context. If your website never mentions competitors by name, AI can’t place you in comparison queries. You need “[Your Brand] vs [Competitor]” and “alternatives to [Competitor]” pages. These are entity relationship signals that tell AI how you fit in the market.

A warning here: brands that publish “best [category]” listicles with themselves ranked #1 are increasingly being detected and filtered. AI models are already filtering “spammed categories” of self-promotional listicles, and a broader crackdown on this pattern is coming. Write comparison pages that are genuinely useful, not thinly disguised ads.

Inconsistent descriptions across platforms. Homepage says “revenue intelligence platform.” G2 says “sales analytics tool.” LinkedIn says “AI-powered forecasting.” Three conflicting definitions. AI either picks the wrong one or skips you. Audit every external listing and align them.
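Once you’ve chosen the one description you want everywhere, flagging listings that deviate is a simple comparison. In this sketch the listings and labels are hypothetical and copied in by hand, and matching is exact after lowercasing and whitespace cleanup, so near-synonyms will still be flagged for a human to judge.

```python
def normalise(label):
    """Lowercase and collapse whitespace so trivial differences don't count."""
    return " ".join(label.lower().split())


def find_mismatches(listings, canonical):
    """Return the listings whose description differs from the chosen canonical one."""
    target = normalise(canonical)
    return {src: text for src, text in listings.items() if normalise(text) != target}


# Hypothetical descriptions copied from each listing.
listings = {
    "Homepage": "Revenue intelligence platform",
    "G2": "Sales analytics tool",
    "LinkedIn": "AI-powered forecasting",
    "Crunchbase": "revenue  intelligence platform",
}
for source, text in find_mismatches(listings, "Revenue intelligence platform").items():
    print(f"{source} says '{text}': align it")
```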

Poorly structured content. 44.2% of AI citations come from the first 30% of a page (Kevin Indig, Growth Memo). If your answer sits at the bottom of a 3,000-word piece, it won’t get cited.
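To check whether the answer on a page sits early enough, you can locate a key phrase and measure how far into the page it starts. The sketch below uses the 30% figure above and the 200-word target from the 90-day plan as illustrative cut-offs; the page text and answer phrase are made up, and tokenisation is deliberately simple.

```python
import re


def tokens(text):
    """Lowercase word tokens; punctuation is dropped."""
    return re.findall(r"[a-z0-9']+", text.lower())


def answer_position(page_text, answer_phrase):
    """(word index where the phrase first appears, fraction of the page before it), or None."""
    words, target = tokens(page_text), tokens(answer_phrase)
    for i in range(len(words) - len(target) + 1):
        if words[i:i + len(target)] == target:
            return i, i / len(words)
    return None


def placement_report(page_text, answer_phrase, max_fraction=0.3, max_words=200):
    pos = answer_position(page_text, answer_phrase)
    if pos is None:
        return {"found": False}
    index, fraction = pos
    return {"found": True, "word_index": index,
            "within_fraction": fraction <= max_fraction,
            "within_word_limit": index < max_words}


# Hypothetical pages: one answers early, one buries the answer at the end.
phrase = "AcmeIQ forecasts pipeline"
early = " ".join(["intro"] * 50) + " AcmeIQ forecasts pipeline from Salesforce data. " + " ".join(["detail"] * 950)
buried = " ".join(["detail"] * 2500) + " AcmeIQ forecasts pipeline from Salesforce data."
print("early:", placement_report(early, phrase))
print("buried:", placement_report(buried, phrase))
```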

The citation data on content format is clear. Listicles make up 43.8% of ChatGPT page-type citations. Data tables get 2.5x more citations than prose. Pages with statistics see +22% visibility, and adding cited sources gives rank-5 sites a +115.1% visibility lift (Aggarwal et al., Princeton GEO study). 81% of AI-cited pages have schema markup (AccuraCast, 2025). Pages with FAQPage schema are 3.2x more likely to appear in Google AI Overviews (Frase.io, 2025).
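If you add FAQPage schema, the markup is JSON-LD using schema.org’s FAQPage, Question, and Answer types. This sketch generates it from question-and-answer pairs; the questions and answers are hypothetical, and you’d still validate the output with a structured-data testing tool before shipping it.

```python
import json


def faq_jsonld(pairs):
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


# Hypothetical FAQ content.
faqs = [
    ("What is AcmeIQ?",
     "AcmeIQ is a revenue intelligence platform for mid-market B2B SaaS sales teams."),
    ("Does AcmeIQ integrate with Salesforce?",
     "Yes. It syncs pipeline data from Salesforce."),
]
snippet = json.dumps(faq_jsonld(faqs), indent=2)
print(f'<script type="application/ld+json">\n{snippet}\n</script>')
```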

Each section of your content needs to work as a standalone, retrievable unit. AI doesn’t cite pages the way Google ranks them. It retrieves passages. If a section can’t be understood without reading the three paragraphs above it, it won’t get extracted. Definitive, direct language gets cited 2x more than vague hedging.

Stale content. 76.4% of top-cited pages were updated within the last 30 days. AI systems favour content they can trust as current. Pages without dates, without recent updates, without current pricing or feature information get deprioritised. Add “last updated” dates and actually keep them current.
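A simple freshness sweep flags pages whose last real update falls outside a chosen window. The 30-day window here mirrors the statistic above and is a review trigger, not a rule; the page paths and dates are hypothetical, and the check assumes you record the date of substantive updates rather than just bumping the visible date.

```python
from datetime import date, timedelta

# Hypothetical inventory: page path -> date of last substantive update.
pages = {
    "/pricing": date(2026, 3, 10),
    "/acmeiq-vs-rivalone": date(2025, 11, 4),
    "/integrations/salesforce": date(2026, 2, 20),
}


def stale_pages(pages, today, max_age_days=30):
    """Pages not updated within max_age_days of today, oldest first."""
    cutoff = today - timedelta(days=max_age_days)
    return sorted((p for p, updated in pages.items() if updated < cutoff),
                  key=lambda p: pages[p])


print(stale_pages(pages, today=date(2026, 3, 15)))
```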

Good structure was always good SEO. Companies that were already writing clear, answer-first content are fine. Those who relied on long, keyword-stuffed pages for comprehensiveness need to restructure. AI systems penalise weak structure harder than Google ever did.

Step 5: Build a 90-Day Action Plan From Your Findings

Sort fixes into three phases: quick website fixes (weeks 1-2), content gap fills (weeks 3-6), and third-party presence building (weeks 7-12). Companies completing the full plan see citation rates increase 3.4x on average. One client generated $1.2M influenced pipeline within 90 days.

Sort findings into three phases: fix what’s broken, fill the gaps, build the presence that compounds.

Weeks 1-2: Fix what’s broken

- Rewrite your homepage opening: clear category, audience, and outcome in the first two sentences

- Align your product description across your website, G2, Capterra, LinkedIn, and Crunchbase

- Add “last updated: [date]” to your top 10 content pages and actually maintain them

- Restructure your highest-traffic pages so the direct answer appears in the first 200 words

- Add FAQPage schema to your key landing pages and pillar content

- Ensure every major section can be understood independently (node architecture)

Weeks 3-6: Fill the content gaps

- Create comparison pages for your top four competitors (genuinely useful ones, not self-promotional listicles)

- Create an “alternatives to [Your Brand]” page (control the narrative yourself)

- Build or update integration pages naming every platform you connect with

- Add data tables and statistics to your highest-value pages

- Start a G2 review campaign. If your competitor has 2,400 reviews and you have 40, the AI sees that difference

- Ensure author credentials and bylines are on every piece of content

Weeks 7-12: Build third-party presence

- Publish guest content on industry publications your category’s AI citations pull from

- Participate in relevant Reddit communities with genuine answers, not self-promotion. Reddit appears in 46.7% of Perplexity’s top-10 sources and 27% of ChatGPT’s internal search slots

- Create YouTube content for your category. Transcripts make your video content machine-readable, and AI Overviews pull heavily from YouTube

- Build LinkedIn thought leadership for your team’s key voices. Real people associated with your brand build E-E-A-T signals that AI models reward

- Pitch original research to publications. Original data earns 4.31x more AI citations (Yext, 17.2M citations)

We ran this exact process with a $10M ARR B2B SaaS client. Within 90 days: $1.2M influenced pipeline, a 63% increase in SQLs, a 45% higher AI citation rate across priority prompts, and 18% higher demo conversion from AI-referred traffic compared to other channels.

Across all our clients, companies that complete the full 90-day plan see citation rates increase by an average of 3.4x. The gains compound. Third-party presence takes time to build, but once it’s there, AI systems find you from multiple angles.

How to track progress

AI visibility monitoring is still maturing, but tools exist. Otterly.AI tracks brand mentions across AI platforms. GenRank.io monitors ChatGPT specifically. Similarweb has an AI Search Intelligence product. ZipTie.dev analyses citation patterns.

For the audit itself, you don’t need any of these. A spreadsheet and 2-3 hours of manual testing give you the data. The tools become useful for ongoing monthly monitoring after you’ve made changes and want to track whether your citation frequency is improving.

The metrics that matter: inclusion rate (% of prompts where you’re mentioned), citation frequency (how often across repeated tests), share of voice vs competitors, and whether you’re described correctly. Don’t chase raw citation count. Track whether AI recommends you in buying situations.

Re-run the audit monthly for the first quarter, then quarterly after that. AI retrieval patterns shift. What’s cited today might not be next month.
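Comparing audit rounds is mostly bookkeeping. This sketch computes per-prompt inclusion rates from (prompt, mentioned) pairs, reports the change between two rounds, and adds an overall inclusion rate (share of prompts where you appeared at least once); the round names and results are illustrative, and with few runs per prompt, small changes are likely noise.

```python
def inclusion_by_prompt(observations):
    """observations: (prompt, mentioned) pairs from one audit round, several runs per prompt."""
    totals, hits = {}, {}
    for prompt, mentioned in observations:
        totals[prompt] = totals.get(prompt, 0) + 1
        hits[prompt] = hits.get(prompt, 0) + int(mentioned)
    return {p: hits[p] / totals[p] for p in totals}


def overall_inclusion(observations):
    """Share of prompts where the brand appeared in at least one run."""
    rates = inclusion_by_prompt(observations)
    return sum(rate > 0 for rate in rates.values()) / len(rates)


def compare_rounds(before, after):
    """Change in inclusion rate for prompts tested in both rounds."""
    b, a = inclusion_by_prompt(before), inclusion_by_prompt(after)
    return {p: a[p] - b[p] for p in sorted(b.keys() & a.keys())}


# Illustrative results: two runs per prompt in each round.
round_1 = [("best X for Y", True), ("best X for Y", False),
           ("X vs Y", False), ("X vs Y", False)]
round_2 = [("best X for Y", True), ("best X for Y", False),
           ("X vs Y", True), ("X vs Y", False)]

print("Overall inclusion:", overall_inclusion(round_1), "->", overall_inclusion(round_2))
for prompt, delta in compare_rounds(round_1, round_2).items():
    print(f"{prompt}: {delta:+.0%}")
```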

When to Run This Yourself vs. Bring In Help

DIY if you have 2-4 hours and fewer than 10 competitors. Bring in a specialist if gaps are significant, your category is crowded, or you need ongoing monitoring. The audit is straightforward. The third-party presence work is where most teams need help.

Do it yourself if:

- You have 2-4 hours and someone who knows your competitive landscape

- Your category has fewer than 10 direct competitors

- You want to see the current state before deciding whether to invest further

Bring in a specialist if:

- You’ve found gaps but don’t know how to prioritise the fixes

- Your category is crowded and prompt coverage is complex

- You need to compress months of work into weeks

- You need ongoing monitoring, not a one-off snapshot

This audit works best for: B2B SaaS companies with established competitors and an existing content footprint that should be producing AI visibility but isn’t. Marketing teams, demand gen leads, and content strategists get the most from it.

Skip it if: You’re pre-revenue without competitors to benchmark, or your category is so niche that AI systems haven’t indexed the space yet. If buyers aren’t asking about your category in AI tools, an audit won’t find much.

The five steps above are the straightforward part. What separates companies that see pipeline impact from those that don’t is the third-party presence work in Step 5. Most teams can fix their own website. Building visibility across the platforms that AI actually cites from is where it gets harder, and where most of the value sits.

Frequently Asked Questions

How often should I run an AI search visibility audit?

Monthly for the first quarter after making changes, then quarterly. Perplexity refreshes its index weekly. ChatGPT updates its search and training data periodically. Google AI Overviews shift with algorithm changes. Quarterly audits catch drift before it affects pipeline.

Does ranking well on Google guarantee I’ll appear in AI answers?

No. The overlap between Google rankings and AI citations is small and shrinking. When ChatGPT searches the web, 87% of its citations match Bing’s top 10, not Google’s. AI systems weight brand mentions, third-party presence, and content structure differently from Google’s ranking algorithm. We’ve seen companies on page one for competitive terms that are completely absent from AI recommendations.

AI results change every time I ask. How can this audit be reliable?

It can’t be reliable the way Google rankings are. That’s the point. AI search is probabilistic, not positional. You’re not tracking “we rank #3.” You’re tracking “we appear in 7 out of 10 responses for this prompt.” Run each prompt multiple times across different days. The pattern is the data. A brand that shows up 80% of the time on a buying prompt is in a fundamentally different position from one that shows up 10% of the time, even if individual responses vary.

What’s the difference between this and a traditional SEO audit?

An SEO audit measures crawlability, rankings, and backlinks. This audit measures whether AI systems mention, describe, and recommend your brand in buying-intent conversations. The disciplines overlap: both involve technical SEO, content strategy, and off-page work. But the signals they measure are different. You need both.

Can I improve AI visibility without changing my website?

Partly. Fixing external listings for consistency helps. Getting more third-party mentions and reviews helps. But the biggest gains come from two things: restructuring on-site content so answers are easy to extract, and building real presence on the third-party platforms AI systems cite from.

Should I create “best [category]” listicles with my brand at #1?

Be careful. Self-promotional listicles where you rank yourself first are being detected and filtered by AI models. Multiple AI platforms are already filtering “spammed categories” of these, and a broader crackdown is coming. Create comparison content that’s genuinely useful and honest. AI systems are getting better at recognising content designed to game them.

How is this different from AEO or GEO services?

We don’t use AEO or GEO branding. The disciplines are SEO, content marketing, and digital PR. What’s different is the signals: brand mentions over backlinks, extractability over comprehensiveness, third-party presence over domain authority alone. 80% of the work is solid SEO done well. The 20% where AI search diverges is where the competitive edge sits. If an agency can’t tell you what they do differently from traditional SEO, they’re probably just relabelling it.

Last updated: March 2026. Last reviewed for accuracy: March 2026.